My Azure Stack HCI Home Lab - Part 4

- Nathan

- May 26, 2024

- 7 min read

Updated: Jan 11

Warnings:

"Azure Stack HCI" has been re-branded/renamed to "Azure Local"

Microsoft requires ECC RAM now. That means it may no longer be possible to use the MS-01 workstations to build a cluster. Update: I found a workaround.

If you haven't already, please check out the other parts of the series first:

Logical Networks

Now that the cluster is built, I'm going to start deploying some workloads onto it. Let's start with deploying an AKS cluster.

Right off the bat, we can see that we haven't met the prerequisites to deploy AKS onto Stack HCI (screenshot 1 above). If we click for more details, we can see that we're missing a Logical Network (screenshot 2 above) which is required for AKS on Stack HCI. More info on Logical Networks can be found here.

So, let's create a Logical Network. There are a few ways you could do this. You could use Windows Admin Center (WAC), connect to your Stack HCI failover cluster, and then create the Logical Network. For this article, though, we are going to create the Logical Network through the Azure Portal. If you open the Stack HCI cluster in the portal, you can go to Logical Networks and create a new one.

Pick a name to use for your Logical Network. Then, enter the name of the existing Virtual Switch that's used for the compute network in your failover cluster. In my case, the name of the virtual switch is "ConvergedSwitch(compute_management)" but this might be different for you. You can log in to one of your nodes and run the following command to find the name of your switch: (Get-VmSwitch -SwitchType External). Finally, you must choose a Region and a Custom Location, but both should be pre-populated based on your cluster, and you won't be able to change them. This is all shown in screenshot 1 above.

Screenshots 2 and 3 above show you the two available options for your new Logical Network: Static (screenshot 2) or DHCP (screenshot 3). Important: Static is the only option that is supported by AKS on HCI, so you must pick that. For Static networks, you must define an address space in CIDR format. Then, you must create 1 or more pools of IPs from that CIDR range. Warning: don't let your IP Pools use up the entire CIDR range. You must leave some free space for things like the AKS Control Plane IP(s) and Load Balancer IP(s). We will talk about these later.
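To sanity-check how much room is left outside your IP Pools, here is a minimal Python sketch using the standard ipaddress module. The CIDR and pool range are made-up lab values, not the ones from my cluster, and the function name is just something I picked for illustration. It also doesn't account for your gateway or anything else already using addresses on that subnet.

```python
import ipaddress


def free_addresses(cidr, pools):
    """Count usable host addresses in `cidr` that no IP pool covers.

    `pools` is a list of (start, end) address strings, inclusive.
    Builds sets of addresses, so it is only meant for small lab subnets.
    """
    network = ipaddress.ip_network(cidr)
    usable = set(network.hosts())
    pooled = set()
    for start, end in pools:
        first = ipaddress.ip_address(start)
        last = ipaddress.ip_address(end)
        if first > last:
            raise ValueError(f"pool {start}-{end} is reversed")
        if first not in network or last not in network:
            raise ValueError(f"pool {start}-{end} is outside {cidr}")
        pooled.update(
            ipaddress.ip_address(n) for n in range(int(first), int(last) + 1)
        )
    return len(usable - pooled)


# Hypothetical values: a /24 with a single 50-address pool.
print(free_addresses("192.168.10.0/24", [("192.168.10.50", "192.168.10.99")]))  # 204
```

If this prints 0, the pools have swallowed the whole range and there is nowhere left to put the Control Plane IP or the Load Balancer IPs.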

Kubernetes Clusters on Azure Stack HCI

Now that we have a Logical Network, let's create our AKS cluster. In the portal, click on your HCI cluster, go to the section for Kubernetes, and then click Create.

The first tab is shown above. You must type a name for your AKS cluster. You must pick the Custom Location that we created earlier. Then, you must pick which version of Kubernetes to use. It is important to know that the versions available for AKS on Stack HCI are different from the versions available for AKS on Azure. At the time of this writing, the latest version of AKS on HCI was 1.27.3, whereas the latest version of AKS on Azure was 1.29.4. Next, you must decide on the size of your primary node pool. These will be the AKS nodes where you deploy your containers. These nodes will be created as VMs on your Stack HCI failover cluster, so make sure to size them accordingly. For example, you don't want to pick a huge node size (say 32 CPUs) if your backend physical nodes only have 16 CPU cores each. Lastly, you can choose to create a new SSH key pair for the cluster, or pick an existing one.

When you run AKS on Azure, Microsoft hosts the AKS Control Plane for you. However, when you run AKS on Stack HCI, you must host the AKS Control Plane on your own cluster, using a dedicated node pool. Above, you will see the settings available for creating this Control Plane node pool. You can pick the node size to use, as well as how many nodes to create (you can pick 1, 3, or 5). Lastly, at the bottom, you can optionally create extra node pools (for example, if you need a Windows node pool).

The next tab, shown above, lets you pick an option for authentication and authorization for your AKS cluster. You can choose to use local accounts with Kubernetes RBAC. Or, you can choose to use Entra ID authentication with Kubernetes RBAC. If you choose the Entra ID option, you must also select an Entra ID group which will be given admin access to the AKS cluster.

The next tab, shown above, is where you configure the network settings. You must pick the Logical Network that we created earlier. Then, you must pick a single IP address that will be used for the cluster's Control Plane. Behind the scenes, this IP will be attached to a KubeVIP load balancer, but Azure will take care of managing all of that for you. Important: this IP address must be from the CIDR range defined on the Logical Network. However, this IP address must NOT be included in any IP Pools that you defined previously. Lastly, you will see that AKS on Stack HCI uses Calico for network policies, and you have no other options available.

The last tab that we will discuss, shown above, gives you an option to enable Container Insights monitoring on your AKS cluster. If you choose to enable it, you must also pick a Log Analytics Workspace where all of the monitoring data will be stored.

And that's pretty much it. From here you can deploy your AKS cluster. Azure will start creating VMs on your Stack HCI failover cluster. There will be 1 VM created per node in your Primary Node Pool. There will also be 1 VM created per node in your Control Plane Node Pool.

The screenshot above shows the nodes in my AKS cluster after a successful deployment. You'll see I chose to go with 1 Control Plane node, and 3 nodes in my Primary Node Pool.

Connecting to your new cluster

You can use the Az CLI to pull down a properly configured 'kubeconfig' file that will allow you to connect to your new cluster. If you're curious about kubectl and kubeconfig files, I write more about them here.

You must first have the Az CLI installed. You must also install the 'aksarc' extension. You can do so with the following command: az extension add --name aksarc

When you're ready, you can run this command to download a kubeconfig file: az aksarc get-credentials --name <myClusterName> --resource-group <myClusterRG>

After that, you should be able to run kubectl commands against your cluster. This assumes that you have proper permissions to the cluster. This also assumes that you can communicate with the private IP address of the control plane. More information can be found here.
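If kubectl just hangs, a quick thing to check is which address the kubeconfig actually points at. Here is a rough Python sketch of that check. It uses a simple regex instead of a real YAML parser (so it assumes unquoted `server:` values), and the sample kubeconfig text and IP address are made up for illustration.

```python
import ipaddress
import re
from urllib.parse import urlsplit


def api_servers(kubeconfig_text):
    """Return (host, port) for every 'server:' entry found in a kubeconfig."""
    servers = []
    for match in re.finditer(r"^\s*server:\s*(\S+)", kubeconfig_text, re.MULTILINE):
        url = urlsplit(match.group(1))
        servers.append((url.hostname, url.port or 443))
    return servers


def is_private(host):
    """True if `host` is an IP literal in a private range, False otherwise."""
    try:
        return ipaddress.ip_address(host).is_private
    except ValueError:
        return False  # a DNS name rather than an IP literal


# Hypothetical kubeconfig fragment, not copied from a real cluster.
sample = """
clusters:
- cluster:
    server: https://192.168.10.20:6443
  name: my-aks
"""

for host, port in api_servers(sample):
    print(host, port, "private" if is_private(host) else "not private")
```

A private address here means your workstation needs a route to the Logical Network (same LAN, VPN, and so on) before kubectl will work.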

MetalLB Load Balancer

If you create a Kubernetes Service of type 'LoadBalancer' then AKS HCI will not know what to do with it, at least by default. The Service will stay in 'Pending' state and an external IP from the Logical Network will never be assigned to it.
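If you want to reproduce this yourself, here is a small Python sketch that prints a Service manifest of type 'LoadBalancer' as JSON (kubectl accepts JSON as well as YAML). The Service name, the `app` label, and the ports are placeholders I made up; swap in whatever matches your own deployment.

```python
import json


def load_balancer_service(name, app_label, port, target_port):
    """Build a minimal Kubernetes Service manifest of type LoadBalancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            # Hypothetical label; it must match the labels on your pods.
            "selector": {"app": app_label},
            "ports": [
                {"protocol": "TCP", "port": port, "targetPort": target_port}
            ],
        },
    }


# Placeholder values for illustration only.
print(json.dumps(load_balancer_service("web", "web", 80, 8080), indent=2))
```

Save the output to a file such as service.json and apply it with kubectl apply -f service.json. Until a load balancer is configured, kubectl get service should show the external IP as pending.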

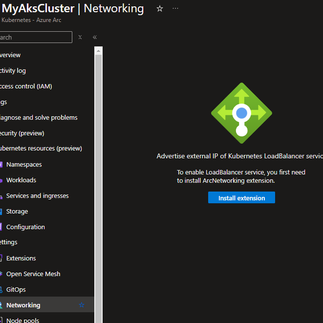

To fix this, we must install the 'ArcNetworking' extension onto our new AKS Cluster. Behind the scenes, this is configuring a MetalLB load balancer. As you can see in screenshot 1 above, you need to go to your AKS cluster, go to Networking, and then click on "Install Extension." After the extension is installed and ready, you can add a new load balancer, and you will be prompted for some settings (screenshot 2).

You must give your new Load Balancer a name. You must also specify an IP range that your Load Balancer will be able to use. Important: this range must be from the CIDR range defined on the Logical Network. However, this range must NOT be included in any IP Pools that you defined previously, and it must not overlap with the Control Plane IP address. If you want, you can even provide just a single IP address here. Next, you can specify an optional Service selector. If you want this Load Balancer to only apply to specific Services, then define that here. Otherwise, if you leave this blank, the Load Balancer will apply to all Services. Finally, you can pick an option for Advertise Mode. I won't go into details on this option, but for more information check this link.
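Those three rules for the Load Balancer range are easy to get wrong, so here is a minimal Python sketch that checks a candidate range against them. All of the addresses are hypothetical lab values and the function names are my own. This only mirrors the rules described above; it is not how Azure validates the input.

```python
import ipaddress


def ip_range(start, end):
    """Parse an inclusive (start, end) pair into two address objects."""
    first, last = ipaddress.ip_address(start), ipaddress.ip_address(end)
    if first > last:
        raise ValueError(f"range {start}-{end} is reversed")
    return first, last


def overlaps(a, b):
    """True if two inclusive ranges share at least one address."""
    return a[0] <= b[1] and b[0] <= a[1]


def check_lb_range(cidr, pools, control_plane_ip, lb_range):
    """Return a list of problems; an empty list means the range looks usable."""
    network = ipaddress.ip_network(cidr)
    control_plane = ipaddress.ip_address(control_plane_ip)
    lb = ip_range(*lb_range)
    problems = []
    if lb[0] not in network or lb[1] not in network:
        problems.append("load balancer range is outside the Logical Network CIDR")
    for start, end in pools:
        if overlaps(lb, ip_range(start, end)):
            problems.append(f"load balancer range overlaps IP pool {start}-{end}")
    if lb[0] <= control_plane <= lb[1]:
        problems.append("load balancer range contains the Control Plane IP")
    return problems


# Hypothetical lab values.
print(check_lb_range(
    cidr="192.168.10.0/24",
    pools=[("192.168.10.50", "192.168.10.99")],
    control_plane_ip="192.168.10.20",
    lb_range=("192.168.10.200", "192.168.10.210"),
))  # []
```

To check a single IP address instead of a range, pass the same address as both the start and the end.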

After your Load Balancer is created, any Kubernetes Service of type 'LoadBalancer' will get an external IP address from the Load Balancer's range.

Final Network Setup

What does our Logical Network look like after we deploy everything? Well, I created a diagram to outline all of the pieces:

1 IP Address will be used for the Control Plane

1 or more IP Addresses will be used by your MetalLB Load Balancers (depends on how many you create / need)

A Pool of IP Addresses, to be used for:

1 IP per node in the Control Plane node pool

1 IP per node in the Primary node pool

1 IP per node in any additional node pools you create

1 IP per AKS cluster needs to be available for upgrade operations
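To put numbers on the pool portion of that list, here is a tiny Python sketch for a single AKS cluster. The function name and parameters are my own invention for illustration. It only counts the addresses that come out of the IP Pool; the Control Plane IP and the Load Balancer IPs live outside the pool and are not included.

```python
def pool_ips_needed(control_plane_nodes, primary_nodes, extra_pool_nodes=()):
    """IPs one AKS cluster needs from the IP Pool.

    One IP per node in every node pool, plus one spare IP that must be
    available for upgrade operations.
    """
    nodes = control_plane_nodes + primary_nodes + sum(extra_pool_nodes)
    return nodes + 1


# My lab cluster: 1 Control Plane node and 3 nodes in the Primary Node Pool.
print(pool_ips_needed(control_plane_nodes=1, primary_nodes=3))  # 5
```

If you plan to run more than one AKS cluster on the same Logical Network, add up the result for each cluster.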

Extra Credit: AKS HCI Bicep Template

As I was working in my lab, I created and destroyed many AKS clusters. I decided I would save myself some time and create a Bicep template to help me out. I've posted the template on my GitHub; you can find a link to it here. The template will deploy both a Logical Network and an AKS HCI cluster.

Wrap Up

That's it for this part of the series. In Part 5, I'm going to try to tackle installing Azure Virtual Desktop onto my HCI cluster. Stay tuned.

Hi Nathan, not sure where you try to connect to the AKS on HCI cluster, from the physical machine of the cluster or from your personal work laptop (outside the cluster). I notice the 'server' in the kubeconfig file is only a private IP of my two-node-hci-cluster. Looks like the AKS on HCI cluster couldn't have a public endpoint like AKS Cluster. Thanks.